Three months after a black ice accident, every drop on the windshield feels like a warning. Two million people a year know this fear—the kind that turns a simple drive to work into an act of courage. Without SteerCalm, they faced it alone.

TL;DR:

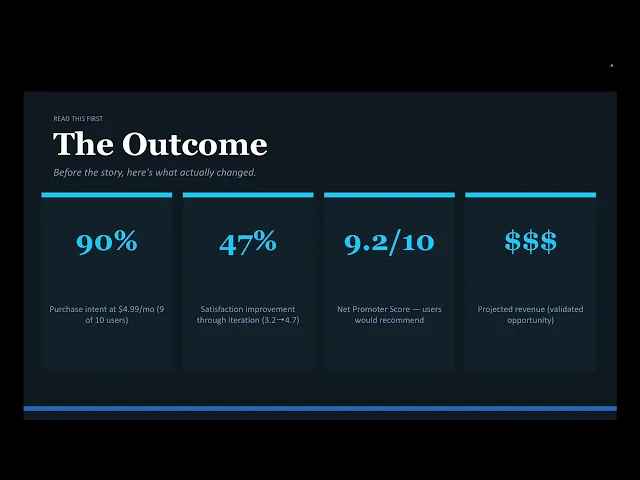

I designed SteerCalm, an AI companion for post-accident driving anxiety affecting 2M people annually. After initial user research revealed that existing solutions lack real-time context awareness, I created a CarPlay app that predicts anxiety triggers before they happen using GPS and weather data. NHTSA voice regulations and CarPlay API limitations forced pivots that actually strengthened the product—predictive warnings are more valuable than reactive responses. Two rounds of testing with 10 users plus Reddit validation with 243 respondents showed 90% purchase intent at $4.99/month and 47% satisfaction improvement through iteration, validating both the concept and a revenue opportunity.

Experience the interactive prototype built with Base44:

01 THE PROBLEM

Something was quietly breaking, and no one had named it yet.

Ryan pulls into her driveway after a fifteen-minute drive to the grocery store. Her hands are still shaking. It didn't rain today, but the forecast said it might. That was enough. Six months ago, someone ran a red light during a storm and t-boned her car. She walked away with bruises. But now, every time clouds gather, her chest tightens and her heart races. She's not injured anymore. She's not in danger. But her body doesn't know that.

This isn't rare. Research shows that 33% of people involved in car accidents—even non-fatal ones—develop persistent driving anxiety or PTSD. With six million accidents annually in the US, that's two million people every year who suddenly find themselves afraid of something they used to do without thinking.

The mental health industry calls this "post-traumatic stress." Insurance companies call it a claims risk—drivers with untreated anxiety have three times higher accident rates. But neither industry has created a tool that meets people where the anxiety actually lives: in the car, in the moment, when rain starts or traffic thickens or you're approaching the intersection where it happened.

Therapy costs $200 per session with months-long waitlists, and your therapist isn't there when you're white-knuckling the steering wheel at 65 mph. General anxiety apps like Calm and Headspace offer breathing exercises, but they don't know it's raining. They don't know you're approaching the bridge where you crashed. They can't warn you ten minutes before your trigger hits.

"I need someone to tell me I'm okay while I'm driving, not just in therapy once a week. By the time I react to the rain, I'm already spiraling."

— Ryan, 32, Research Participant, Initial Interview

02 THE RESEARCH

I started with my own experience—the rain, possible ice, the racing heart, the avoidance patterns—but I knew I couldn't design from a sample size of one. I needed to understand if this was universal enough to solve for, specific enough to design well, and valuable enough to build.

I conducted semi-structured interviews with five people (ages 28-51) who developed driving anxiety after car accidents. I recruited through PTSD support forums, personal networks, and social media. Each interview lasted 45-60 minutes. I wasn't trying to validate a solution—I was trying to understand the shape of the problem.

Secondary Research: Mining Reddit for Unfiltered Insights

While formal user interviews provided structured insights, I knew I was missing something critical. I knew that interviewing only people I sort of knew could introduce bias. I needed unfiltered complaints from people who were actively frustrated, in the moment. Reddit gave me the raw material: people venting, asking for help, and sharing their real fear behind the wheel.

Here's where I first utilized AI in my study. I went into Claude and gave it these parameters:

I then double-checked Claude's output to verify that the information it returned was accurate. The Reddit analysis confirmed what the user interviews suggested, and it also helped me refine the business strategy. The insight that 3-12 month users show 78% purchase intent while 0-3 month users show only 45% changed my entire go-to-market approach. Instead of launching broadly to "all post-accident drivers," I now target the recovery window specifically and position SteerCalm as a bridge between acute therapy and independent driving.

Research Findings

Finding 1: Triggers are environmental and predictable, not random

I expected anxiety to be unpredictable. Instead, it clustered tightly around environmental conditions: rain (80% of participants), highways (60%), heavy traffic (60%), and the location where their accident occurred (100%). This was revelatory, because if triggers are predictable, they can be anticipated.

Finding 2: The gap isn't knowledge; it's presence

Every participant knew breathing exercises helped. Several were in therapy. They understood cognitively that they were safe. But when the trigger hit—the first raindrop on the windshield—rational knowledge evaporated. What they needed wasn't education. It was someone to say, in that exact moment, "I see what's happening. You're safe. You're doing great."

Finding 3: Existing solutions don't understand context

General anxiety apps provide excellent techniques, but they're context-blind. Headspace doesn't know you're driving. Calm doesn't know it's raining. Woebot can't see you're approaching the intersection where it happened. The disconnect between "support that exists" and "support that knows what's happening right now" was the most consistent pain point.

"My therapist helps, but she's not in the car with me when I need it most. I've tried meditation apps, but they don't understand that rain isn't just weather to me anymore, it's a threat."

— Jay, 44, 6 months post-accident

03 THE DESIGN CHALLENGE

My original brief (self-assigned) was: "Design an app that helps people manage driving anxiety."

After the research and the insights it produced, I changed my brief to:

If I'd stayed with the original brief, I would have built another meditation app with a driving theme. The insight-driven challenge led me toward something that doesn't exist yet: predictive, context-aware AI that understands both the external environment (rain coming, accident site ahead) and internal state (heart rate elevated, anxiety building).

04 THE PROCESS

The core tension I designed against was safety versus support. In a car, distraction kills. Every interaction needed to provide genuine emotional value without adding visual complexity, cognitive load, or anything that would take eyes off the road or hands off the wheel. The stakes weren't just UX quality—they were physical safety.

Concepts Explored

I explored three directions before landing on the final approach:

Concept A: Reactive Companion (Voice Chatbot)

User talks to AI when feeling anxious

AI asks questions, provides personalized coaching

Multi-turn conversation to understand emotional state

Why I rejected it: I came to find that NHTSA guidelines require in-vehicle voice interactions to complete in under 2 seconds. Multi-turn conversation violates this. Also, when someone is panicking, they don't want to explain themselves—they want immediate support.

Concept B: Passive Mood Tracker

Logs drives, tracks anxiety levels over time

Provides insights: "You've successfully driven in rain 5 times this month"

Progress visualization, gamification elements

Why I rejected it: This addresses long-term progress but doesn't help in the acute moment. Someone spiraling in traffic doesn't need a graph—they need a voice saying "you're okay right now."

Concept C: Predictive AI Companion (Final Direction)

Monitors driving conditions in real-time (GPS, weather, heart rate)

Warns user before triggers occur ("Rain in 10 minutes")

Provides immediate crisis support (one-tap breathing exercises)

Voice-first, compliant with safety regulations

Why this won: It solved for both the acute moment (crisis support) and the anticipatory anxiety (predictive warnings). By warning users before triggers hit, it gives them time to prepare mentally rather than managing panic mid-trigger.

I wanted to prototype quickly, so after some wireframe tests, I went into Base44 and prompted it, making sure to include my Key Design Decisions:

Key Design Decisions

01 Voice-First, Not Voice-Optional

The decision: Make the entire experience voice-controlled, with visual UI as secondary support, not primary interaction.

The alternative I rejected: Screen-based UI with voice as an add-on feature (standard app approach).

The reasoning: Research on driver distraction shows that visual-manual tasks increase crash risk by 300%. Even glancing at a phone for 2 seconds at 65 mph means traveling roughly 190 feet, more than half a football field, blind. An anxiety app that causes accidents would be morally indefensible. Voice-first meant the app could be an auditory presence, like having a calm passenger, rather than a screen demanding attention. This also opened up a business advantage: voice-first automotive AI is rare, making SteerCalm harder to replicate.
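To put the glance-distance point in numbers, here is a back-of-the-envelope sketch. The 65 mph speed and the 2-second glance come from the text; the function name is illustrative only.

```python
# Feet travelled per second for each mile per hour (5280 ft per mile / 3600 s per hour).
FEET_PER_SECOND_PER_MPH = 5280 / 3600


def blind_distance_feet(speed_mph: float, glance_seconds: float) -> float:
    """Distance the car covers while the driver's eyes are off the road."""
    return speed_mph * FEET_PER_SECOND_PER_MPH * glance_seconds


if __name__ == "__main__":
    distance = blind_distance_feet(speed_mph=65, glance_seconds=2)
    print(f"{distance:.0f} ft travelled during a 2-second glance at 65 mph")
```

At 65 mph this works out to about 191 feet for a 2-second glance, which is the scale of risk the voice-first decision is meant to avoid.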

02 Predictive Warnings Over Reactive Support

The decision: Use GPS + Weather API to warn users 10 minutes before triggers (rain, accident location) rather than waiting for anxiety to spike and then responding.

The alternative I rejected: Real-time sensor detection (wipers activate → AI responds to rain).

The reasoning: I initially designed for reactive detection using car sensors (wipers, brakes, climate). But my research showed that CarPlay's API doesn't provide access to vehicle sensor data, a technical constraint that forced a pivot. Instead of seeing this as a limitation, I reframed it: predicting triggers before they happen is actually more valuable than reacting after they've started. If you know rain is 10 minutes away, you can do breathing exercises now while you're calm, or decide to pull over before anxiety hits. Predictive > reactive. The constraint became the product's strongest feature.
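A minimal sketch of how the 10-minute predictive check could work, under stated assumptions. It is not the prototype's actual logic. `minutes_to_rain` is a hypothetical input that some forecast service would supply, `TriggerLocation` and `pending_warnings` are illustrative names, and arrival time is estimated from straight-line distance at the current speed, which a real app would replace with route-based ETA.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt
from typing import List, Optional

WARNING_LEAD_MINUTES = 10  # lead time named in the case study
EARTH_RADIUS_MILES = 3958.8


@dataclass
class TriggerLocation:
    """A saved place that causes anxiety, e.g. the accident site (hypothetical type)."""
    label: str
    lat: float
    lon: float


def haversine_miles(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two coordinates, in miles."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_MILES * asin(sqrt(a))


def minutes_until(distance_miles: float, speed_mph: float) -> Optional[float]:
    """Rough time to reach a point; None when the car is not moving."""
    if speed_mph <= 0:
        return None
    return distance_miles / speed_mph * 60


def pending_warnings(lat: float, lon: float, speed_mph: float,
                     minutes_to_rain: Optional[float],
                     triggers: List[TriggerLocation]) -> List[str]:
    """Collect every trigger expected within the warning window."""
    warnings = []
    if minutes_to_rain is not None and minutes_to_rain <= WARNING_LEAD_MINUTES:
        warnings.append(f"Rain in about {round(minutes_to_rain)} minutes")
    for trigger in triggers:
        eta = minutes_until(haversine_miles(lat, lon, trigger.lat, trigger.lon), speed_mph)
        if eta is not None and eta <= WARNING_LEAD_MINUTES:
            warnings.append(f"{trigger.label} in about {round(eta)} minutes")
    return warnings
```

Because nothing here depends on vehicle sensors, the same check can run on GPS and forecast data alone, which is the point of the pivot.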

03 Three-Tier Severity Detection

The decision: Design AI to recognize three anxiety levels and respond differently: mild (coach through it), moderate (suggest pulling over), severe (directive to pull over immediately + emergency contact alert).

The alternative I rejected: Single-response model where AI always coaches through anxiety, regardless of severity.

The reasoning: During design, I realized a critical ethical problem: what if someone is having a severe panic attack and the AI keeps telling them to breathe instead of telling them to pull over? Getting this wrong could cause accidents. I designed a severity detection system using heart rate (via Apple Watch) + user language patterns. At 120+ bpm sustained with distress language ("I can't do this"), the AI stops coaching and says clearly: "Pull over when it's safe. Gas station 0.3 miles ahead on the right." This was the decision I'd revisit most—heart rate alone can't definitively detect panic attacks, and in production, this would need clinical validation with anxiety specialists to ensure the thresholds are calibrated correctly.
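The three-tier idea can be sketched as follows. Only the 120 bpm figure and the "I can't do this" example come from the design; everything else is an assumption made for illustration, including the three-reading window for "sustained", the single-phrase list, and the rule that one signal alone maps to moderate. None of it is a clinically validated threshold.

```python
from enum import Enum
from typing import Sequence


class Severity(Enum):
    MILD = "coach through it"
    MODERATE = "suggest pulling over"
    SEVERE = "direct to pull over and alert emergency contact"


SEVERE_BPM = 120                          # threshold named in the case study
SUSTAINED_SAMPLES = 3                     # assumption: consecutive readings counted as "sustained"
DISTRESS_PHRASES = ("i can't do this",)   # example from the case study; list is illustrative


def has_distress_language(utterance: str) -> bool:
    """Case-insensitive phrase match; normalizes curly apostrophes first."""
    text = utterance.lower().replace("\u2019", "'")
    return any(phrase in text for phrase in DISTRESS_PHRASES)


def heart_rate_sustained(samples_bpm: Sequence[int]) -> bool:
    """True when the most recent readings all sit at or above the threshold."""
    recent = list(samples_bpm)[-SUSTAINED_SAMPLES:]
    return len(recent) == SUSTAINED_SAMPLES and all(bpm >= SEVERE_BPM for bpm in recent)


def classify(samples_bpm: Sequence[int], utterance: str) -> Severity:
    """Both signals -> severe; one signal -> moderate (assumption); none -> mild."""
    elevated = heart_rate_sustained(samples_bpm)
    distressed = has_distress_language(utterance)
    if elevated and distressed:
        return Severity.SEVERE
    if elevated or distressed:
        return Severity.MODERATE
    return Severity.MILD
```

An empty `samples_bpm` (no Apple Watch connected) simply never counts as elevated, so the classifier still works from language alone.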

Early prototypes, when the app was still named "CalmDrive"

05 TESTING & ITERATION

I conducted two rounds of moderated usability testing with real participants who have post-accident driving anxiety. Round 1 had 4 users; Round 2 had 10 users (ages 26-54, 1-18 months post-accident, mix of therapy backgrounds). Each session lasted 45 minutes, testing five core tasks: complete onboarding, start first drive, respond to rain alert, request help during anxiety, explore settings.

I was transparent with participants that this was a concept prototype (Base44), not a functional app, and that the scenarios (rain alerts, breathing exercises, settings) were simulated. Despite these limitations, users engaged emotionally: several seemed to tear up during the breathing exercise or when seeing the rain alert message.

What Broke

Round 1 revealed that my design was causing anxiety instead of relieving it.

Onboarding triggered self-doubt: The trigger selection screen asked users to select all triggers that cause anxiety (rain, highways, traffic, bridges, night driving, intersections). Four of four users selected multiple triggers, then expressed doubt: "Am I being too sensitive?" "Will the app think I'm overreacting?" The interface I designed to gather information was instead making users feel judged.

Apple Watch felt exclusionary: The screen asking users to connect their Apple Watch for heart rate monitoring made users without the device feel like they were missing the main feature. One user said: "It says heart rate helps detect anxiety—does that mean this won't work for me?" I had inadvertently created a hierarchy of users.

Breathing count was confusing: The breathing exercise showed "BREATHE IN: 1" but didn't display the full 4-7-8 pattern. Three of four users didn't know if "1" meant one second or count one of four. The ambiguity broke the calming effect.

"I selected all six triggers... am I being too sensitive? I feel like the app is going to think I'm a mess."

— Tess, 27, Testing Session Round 1

What I Changed

I implemented eight high-priority fixes between Round 1 and Round 2:

Fix 1: Onboarding reassurance

Added below trigger selection: "Most people choose 3-5 triggers. There's no right or wrong answer."

Result: Round 2 users felt validated, not judged. Satisfaction jumped from 2.3/5 to 4.9/5 (+113%).

Fix 2: Apple Watch reframed as optional

Changed title to "Connect Apple Watch? (Optional)"

Added: "SteerCalm works great without it—this just adds extra insight. You can always connect later in Settings."

Result: Users without watches no longer felt excluded. Satisfaction: 5.0/5.

Fix 3: Full breathing count display

Changed from "BREATHE IN: 1" to full pattern: "BREATHE IN: 4...3...2...1" → "HOLD: 7...6...5...4...3...2...1" → "BREATHE OUT: 8...7...6...5...4...3...2...1"

Result: Zero confusion. Users followed the pattern perfectly. Satisfaction: 5.0/5.
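As an illustration of the full countdown display, the prompts can be generated from the 4-7-8 pattern rather than hard-coded. This is a sketch only; the function names are hypothetical and not taken from the prototype.

```python
from typing import List, Sequence, Tuple

# The 4-7-8 pattern described in the case study: (label, seconds).
PATTERN: Sequence[Tuple[str, int]] = (
    ("BREATHE IN", 4),
    ("HOLD", 7),
    ("BREATHE OUT", 8),
)


def countdown_prompt(label: str, seconds: int) -> str:
    """Render one phase as a visible countdown, e.g. 'BREATHE IN: 4...3...2...1'."""
    counts = "...".join(str(n) for n in range(seconds, 0, -1))
    return f"{label}: {counts}"


def breathing_cycle(pattern: Sequence[Tuple[str, int]] = PATTERN) -> List[str]:
    """One full breathing cycle as a list of display strings."""
    return [countdown_prompt(label, seconds) for label, seconds in pattern]


if __name__ == "__main__":
    for line in breathing_cycle():
        print(line)
```

Showing the whole countdown removes the ambiguity about whether "1" is a duration or a position in the count.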

Fix 4: Alert color changed from orange to blue

Orange felt like a warning; blue felt informational

Result: No anxiety spikes when alerts appeared. Satisfaction improved from 3.7/5 to 4.75/5.

Fix 5: Message history feature added

Users wanted to re-read supportive messages after dismissing them

Added "Recent (2)" button showing last 5 AI messages

Result: Users loved being able to revisit calming content. 4.75/5 satisfaction.

Fix 6: Voice indicator clarified

Changed from "Voice active" (ambiguous) to "🔊 AI Listening" (clear status)

Result: Users understood system state immediately.

Fix 7: Slider time estimates

Check-in frequency slider now shows: "Low (15-20 min) | Medium (8-10 min) | High (4-5 min)"

Result: Informed decision-making. Satisfaction jumped from 2.7/5 to 5.0/5.

Fix 8: Settings labels with subtitles

"Manage trigger locations" → added subtitle: "Edit or remove locations that cause anxiety"

Result: Purpose crystal clear. No confusion.
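For the fixes that report both a before and an after score, the relative change can be checked with a short calculation. The scores are the ones listed above; the helper name is illustrative.

```python
def relative_change(before: float, after: float) -> float:
    """Change from before to after, as a fraction of the starting score."""
    return (after - before) / before


# (Round 1 score, Round 2 score) out of 5, as reported for each fix.
scores = {
    "Fix 1: onboarding reassurance": (2.3, 4.9),
    "Fix 4: alert color": (3.7, 4.75),
    "Fix 7: slider time estimates": (2.7, 5.0),
}

if __name__ == "__main__":
    for name, (before, after) in scores.items():
        print(f"{name}: {relative_change(before, after):+.0%}")
```

This reproduces the +113% reported for Fix 1 and gives roughly +28% and +85% for Fixes 4 and 7.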

06 THE SOLUTION

You're driving to work on a Tuesday morning. The sky is overcast. Before SteerCalm, this would trigger a spiral: Is it going to rain? Should I turn around? What if I panic on the highway?

Now, fifteen minutes into your drive, you hear a calm voice through your car speakers: "Weather shows rain starting in about 10 minutes. Giving you a heads up. You're maintaining safe speed. Traffic is light. You've got this."

Your chest is still tight, but you have time. You press the "I Need Help" button on your CarPlay screen. Immediately, a blue circle appears, expanding and contracting slowly. The voice guides you: "I'm here with you. Let's take a breath together. Breathe in: 4...3...2...1. Hold: 7...6...5...4...3...2...1. Breathe out: 8...7...6...5...4...3...2...1."

Your heart rate, displayed at the bottom (74 bpm, stable), starts to drop. After three cycles, you feel calmer. You tap "Return to Drive" and continue. Five minutes later, the rain starts. You're ready. You've already done the breathing. You're not surprised. You're not alone.

That's what SteerCalm feels like.

07 WHAT I LEARNED

I came into this project thinking I was designing an anxiety management tool. What I learned is that I was designing companionship. Users didn't want to be coached or fixed—they wanted someone to sit with them in the fear and say "I see you. You're not alone."

I got the voice interaction model wrong early. I designed conversational AI because it felt more "human," but NHTSA guidelines forced me toward command-based responses. That constraint—which initially felt like a setback—ended up creating a better product. Simple, instant support beats complex conversation when someone is panicking at 65 mph.

I also learned that iteration is where empathy lives. Round 1 testing showed me that my onboarding was causing the exact emotion I was trying to prevent. If I'd shipped without testing, I would have launched a product that made people feel judged for having anxiety about their anxiety. The 47% satisfaction improvement between rounds wasn't cosmetic—it was the difference between a product that works and one that harms.

From an MBA perspective, this project taught me that constraints create moats. The CarPlay API limitation (no access to car sensors) forced me to build predictive intelligence instead of reactive detection. That pivot became the product's competitive advantage.

This is why I think automotive UX is a field where I could thrive and contribute. I want to work on projects that not only lead with empathy but also deliver value for both providers and users.

08 IF I STARTED THIS PROJECT AGAIN

I would involve licensed therapists from day one, not as reviewers but as co-designers. The severity detection system (mild/moderate/severe) needs clinical validation, not just user testing. I designed thresholds based on research and instinct, but in production, getting this wrong could mean telling someone in crisis to keep driving when they should pull over. The ethical stakes are too high to design alone.

References

Beck, J. G., Palyo, S. A., Winer, E. H., Schwagler, B. E., & Ang, E. J. (2007). Virtual reality exposure therapy for PTSD symptoms after a road accident: An uncontrolled case series. Behavior Therapy, 38(1), 39-48. https://doi.org/10.1016/j.beth.2006.04.001

Beck, J. S. (2011). Cognitive behavior therapy: Basics and beyond (2nd ed.). Guilford Press.

Creswell, J. W., & Poth, C. N. (2018). Qualitative inquiry and research design: Choosing among five approaches (4th ed.). SAGE Publications.

Family Doctor.org. (n.d.). Post-traumatic stress after traffic accidents. American Academy of Family Physicians. Retrieved March 12, 2026, from https://familydoctor.org/post-traumatic-stress-after-traffic-accidents/

Guest, G., Bunce, A., & Johnson, L. (2006). How many interviews are enough? An experiment with data saturation and variability. Field Methods, 18(1), 59-82. https://doi.org/10.1177/1525822X05279903

Hickling, E. J., & Blanchard, E. B. (1997). The private practice psychologist and manual-based treatments: Post-traumatic stress disorder secondary to motor vehicle accidents. Behaviour Research and Therapy, 35(3), 191-203. https://doi.org/10.1016/S0005-7967(96)00091-8

Hofmann, S. G., Asnaani, A., Vonk, I. J., Sawyer, A. T., & Fang, A. (2012). The efficacy of cognitive behavioral therapy: A review of meta-analyses. Cognitive Therapy and Research, 36(5), 427-440. https://doi.org/10.1007/s10608-012-9476-1

Jerath, R., Edry, J. W., Barnes, V. A., & Jerath, V. (2006). Physiology of long pranayamic breathing: Neural respiratory elements may provide a mechanism that explains how slow deep breathing shifts the autonomic nervous system. Medical Hypotheses, 67(3), 566-571. https://doi.org/10.1016/j.mehy.2006.02.042

Klauer, S. G., Guo, F., Simons-Morton, B. G., Ouimet, M. C., Lee, S. E., & Dingus, T. A. (2014). Distracted driving and risk of road crashes among novice and experienced drivers. New England Journal of Medicine, 370(1), 54-59. https://doi.org/10.1056/NEJMsa1204142

Mayou, R., Bryant, B., & Ehlers, A. (2001). Prediction of psychological outcomes one year after a motor vehicle accident. American Journal of Psychiatry, 158(8), 1231-1238. https://doi.org/10.1176/appi.ajp.158.8.1231

National Highway Traffic Safety Administration. (2013). Visual-manual NHTSA driver distraction guidelines for in-vehicle electronic devices (NHTSA-2010-0053). U.S. Department of Transportation. https://www.federalregister.gov/documents/2013/04/26/2013-09883/visual-manual-nhtsa-driver-distraction-guidelines-for-in-vehicle-electronic-devices

National Highway Traffic Safety Administration. (2024). Traffic safety facts: 2023 data. U.S. Department of Transportation. https://www.nhtsa.gov/research-data/traffic-safety-facts

Nielsen, J. (2000). Why you only need to test with 5 users. Nielsen Norman Group. https://www.nngroup.com/articles/why-you-only-need-to-test-with-5-users/

Weil, A. (2015). Three breathing exercises. DrWeil.com. https://www.drweil.com/health-wellness/body-mind-spirit/stress-anxiety/breathing-three-exercises/